Thanks to Abby Sarfas, Ali Ladak, Elise Bohan, Jacy Reese Anthis, Thomas Moynihan, and Joshua Gellers for feedback. This report is also available at https://arxiv.org/abs/2208.04714.

Abstract

This report documents the history of research on AI rights and other moral consideration of artificial entities. It highlights key intellectual influences on this literature as well as research and academic discussion addressing the topic more directly. We find that researchers addressing AI rights have often seemed to be unaware of the work of colleagues whose interests overlap with their own. Academic interest in this topic has grown substantially in recent years; this reflects wider trends in academic research, but it seems that certain influential publications, the gradual, accumulating ubiquity of AI and robotic technology, and relevant news events may all have encouraged increased academic interest in this specific topic. We suggest four levers that, if pulled on in the future, might increase interest further: the adoption of publication strategies similar to those of the most successful previous contributors; increased engagement with adjacent academic fields and debates; the creation of specialized journals, conferences, and research institutions; and more exploration of legal rights for artificial entities.

Introduction

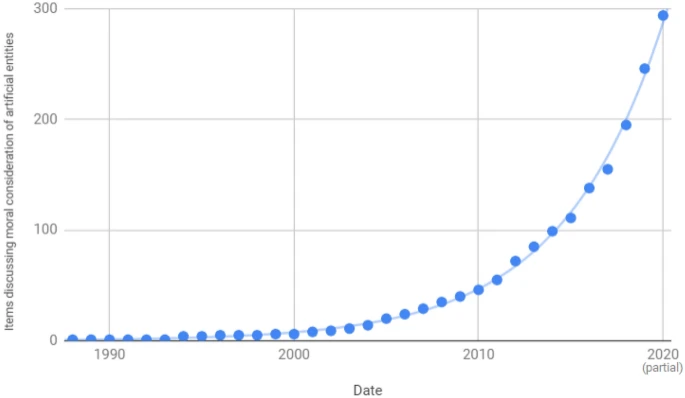

Can, and should, AIs have rights? In the past decade or so, these questions have become the focus of legislative proposals, media articles, and public debate (see Harris & Anthis, 2021), as well as academic books (Gunkel, 2018; Gellers, 2020) and journal publications (e.g. Coeckelbergh, 2010; Robertson, 2014). Academic discussion of AI rights, robot rights, and the moral consideration of artificial entities more broadly (sometimes collectively referred to here simply as “AI rights,” for brevity) has grown exponentially (Harris & Anthis, 2021; see Figure 1).[1] This report presents a chronology of that growth and its contributing factors, discusses the causes of increased academic interest in the topic, and then reviews possible lessons for stakeholders seeking to increase interest further.

Figure 1: Cumulative total of academic publications on the moral consideration of artificial entities, by date of publication (Harris & Anthis, 2021)

Researchers approach the topic with different motivations, influences, and methodologies, often seemingly unaware of the work of other academics whose interests overlap with their own. This report seeks to contextualize and connect the relevant streams of research in order to encourage further study. This is especially important because granting sentient AI moral consideration, such as protection in society’s laws or social norms, may be important for preventing large-scale suffering or other serious wrongs in the future (Anthis & Paez, 2021), and academic field-building is a tractable stepping stone towards this form of moral circle expansion (Harris, 2021).

Methodology

We began with a review of the publications identified by Harris and Anthis’ (2021) literature review, which systematically searched for articles via certain keywords relevant to AI rights and other moral consideration of artificial entities. The reference lists of important included publications were then reviewed, as were the lists of items that cited those publications, primarily using the “Cited by…” button provided on Google Scholar. The titles — and sometimes abstracts — of identified items were reviewed to decide whether further reading or a mention in the text was warranted.

Unlike Harris and Anthis (2021), this report was not a formal, quantitative literature review. There were no strict inclusion or exclusion criteria, but an item was more likely to be read and discussed in detail if it:

- was in an academic format (e.g. journal article, conference paper, or edited book);

- addressed the moral consideration of artificial entities explicitly and in some depth;

- appeared to have arisen independently of other included items (e.g. did not reference previous relevant items or added a different perspective);

- was written in English; and

- was written in the 20th or 21st century.

The results are presented in a thematic narrative, roughly in chronological order, with categorizations emerging during the analysis rather than being fit to a predetermined framework. We focus on implications and hypotheses generated during the analysis, rather than assessing the presence or absence of factors identified as potentially important for the emergence of “scientific/intellectual movements” by previous studies (e.g. Frickel & Gross, 2005; Animal Ethics, 2021).

The thematic narrative is supplemented by keyword searches through PDFs of the publications identified by Harris and Anthis’ (2021) systematic searches that met their inclusion criteria, where the full texts could be identified (270 of 294 items, i.e. 92%). As well as being broken out into tables in the relevant sections below, the full results of these searches and the list of included items are provided in a separate spreadsheet.[2] The keywords were chosen based on expectations about which would be most likely to generate meaningful results,[3] but individual publications returned by the searches were not manually checked to ensure that they actually mentioned the author, item, or idea referred to by the keyword.
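The keyword tables in the sections below can be thought of as the output of a simple counting procedure. The following is a minimal sketch of one way such a table could be produced, not the procedure actually used for this report: the function name `keyword_table`, the toy corpus, and the choice of case-insensitive substring matching are all assumptions made for illustration. Because a substring match cannot tell whether a hit refers to the intended author, item, or idea, the sketch also shows why unchecked results should be read with caution.

```python
from typing import Dict, Iterable, List, Tuple


def keyword_table(
    texts: Dict[str, str], keywords: Iterable[str]
) -> List[Tuple[str, int, float]]:
    """Count how many items mention each keyword at least once.

    `texts` maps a hypothetical item identifier to that item's extracted
    full text. Matching is a case-insensitive substring test, which is an
    assumption; the report does not specify the matching rule.
    """
    total = len(texts)
    rows = []
    for keyword in keywords:
        needle = keyword.lower()
        hits = sum(1 for body in texts.values() if needle in body.lower())
        share = round(100 * hits / total, 1) if total else 0.0
        rows.append((keyword, hits, share))
    # Most frequently mentioned keywords first, as in the report's tables.
    rows.sort(key=lambda row: row[1], reverse=True)
    return rows


# Toy corpus, invented purely for illustration.
sample = {
    "item_a": "Asimov's laws recur throughout science fiction.",
    "item_b": "This paper cites Frankenstein and Asimov.",
    "item_c": "A legal analysis with no fictional references.",
    "item_d": "Science fiction often anticipates policy debates.",
}

table = keyword_table(sample, ["Science fiction", "Asimov", "Frankenstein"])
for keyword, hits, share in table:
    print(f"{keyword} | {hits} | {share}%")

# Share of identified items whose full text was available (270 of 294).
coverage = round(100 * 270 / 294)
```

With 270 searchable items as the denominator, a keyword found in 101 items would be reported as 37.4% under this calculation.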

Results

Figure 2 presents a summary of the different ideas and research identified as having contributed to the nascent research field around AI rights and other moral consideration of artificial entities, each of which will be explored in more depth in the subsections below.

Figure 2: A summary chronology of contributions to academic discussion of AI rights and other moral consideration of artificial entities

[Figure 2 is a chart. Columns (time periods): Pre-20th century; Mid-20th century; 1970s; 1980s; 1990s; Early 2000s; Late 2000s; 2010s and 2020s. Rows (streams of contribution, top to bottom): Synthesis; Moral and social psychology; Social-relational ethics; HCI and HRI; Machine ethics and roboethics; Floridi’s information ethics; Transhumanism, EA, and longtermism; Legal rights for artificial entities; Animal ethics; Environmental ethics; Artificial life and consciousness; Science fiction.]

Science fiction

“The notion of robot rights,” as Seo-Young Chu (2010, p. 215) points out, “is as old as is the word ‘robot’ itself. Etymologically the word ‘robot’ comes from the Czech word ‘robota,’ which means ‘forced labor.’ In Karel Čapek’s 1921 play R.U.R. [Rossum’s Universal Robots], which is widely credited with introducing the term ‘robot,’ a ‘Humanity League’ decries the exploitation of robot slaves — ‘they are to be dealt with like human beings,’ one reformer declares — and the robots themselves eventually stage a massive revolt against their human makers.” Science fiction and mythological treatments of robots or other intelligent artificial entities are numerous and long predate R.U.R.; they include stories from Greek and Roman antiquity featuring automata and sculptures that came to life (Wikipedia, 2021).

Even some of the earliest academic publications explicitly addressing the moral consideration of artificial entities (e.g. Putnam, 1964; Lehman-Wilzig, 1981) set themselves against the backdrop of plentiful science fiction treatments of the topic. Petersen’s (2007) exploration of “the ethics of robot servitude” presents the topic as “natural and engaging… given the prevalence of robot servants in pop culture.”[4] Some works of fiction have become especially widely referenced in the academic literature that has developed around the moral consideration of artificial entities (see Table 1).

Table 1: Science fiction keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| “Science fiction” | 101 | 37.4% |
| Asimov | 71 | 26.3% |
| Frankenstein | 30 | 11.1% |
| “Star Trek” | 23 | 8.5% |
| R.U.R. | 19 | 7.0% |
| Terminator | 18 | 6.7% |
| “Ex Machina” | 17 | 6.3% |
| “Star Wars” | 16 | 5.9% |
| “A Space Odyssey” | 14 | 5.2% |
| Westworld | 14 | 5.2% |
| “The Matrix” | 13 | 4.8% |
| “Real Humans” | 7 | 2.6% |
| “Do Androids Dream of Electric Sheep” | 6 | 2.2% |
| Bladerunner | 4 | 1.5% |

While some of these works explicitly address the moral consideration of artificial entities, such as R.U.R. and Real Humans, others usually just provide popular culture reference points for artificial entities, such as Star Wars, Star Trek, and Terminator. The list above refers to Western sci-fi, but sci-fi has likely been an influence on social and moral attitudes elsewhere, too (e.g. Krebs, 2006).

Artificial life and consciousness

Enlightenment philosophers and scientists sometimes referred to machines or automata when exploring consciousness and other morally relevant questions. For example, René Descartes discussed the capacities and moral value of animals with reference to the physical processes of machines, and he explored whether or not the human mind could be mechanized (Harrison, 1992; Wheeler, 2008). In D’Alembert’s Dream, a series of philosophical dialogues, Diderot (2012; first edition 1782) discussed machines while exploring what might constitute “a unified system, on its own, with an awareness of its own unity.”

Some of the earliest mathematicians and scientists who worked on the development of computers and AI addressed the question of whether these entities could think or otherwise possess intelligence. Indeed, Alan Turing’s famous “Imitation Game” — in which an observer would seek to distinguish a machine from a human by asking them both questions — was designed to partly address this (Oppy & Dowe, 2021). This seems very closely adjacent to the questions of whether artificial entities might be able to feel emotions or have other conscious experiences, which were raised in academic discussion at least as early as 1949 (Oppy & Dowe, 2021).[5] Marvin Minsky, one of the researchers who proposed and attended the 1956 Dartmouth workshop (McCarthy et al., 2006), which is often credited as being a pivotal event in the foundation of the field of artificial intelligence (e.g. Nilsson, 2009), later argued that “some machines are already potentially more conscious than are people” (e.g. Minsky, 1991).[6]

In Dimensions of Mind, the proceedings of the third annual New York University Institute of Philosophy, Norbert Wiener (another pioneer of AI research) noted (1960) that the increasing complexity of machine programming “gives rise to certain questions of a quasi-moral and a quasi-human nature. We have to face the fundamental paradox of slavery. I do not refer to the cruelty of slavery, which we can neglect entirely for the moment as I do not suppose that we shall feel any moral responsibility for the welfare of the machine; I refer to the contradictions besetting slavery as to its effectiveness.”[7]

In the same proceedings, philosopher Michael Scriven (1960, pp. 139-42) critiqued the Turing Test (Turing’s “Imitation Game”) as “oversimple” for testing whether “a robot… had feelings,” but commented that such questions might nevertheless lead to “the prosecution of novel moral causes (Societies for the Prevention of Cruelty to Robots, etc.).” Scriven (1960) then proposed an alternative test, where after teaching a robot the English language and to not lie, we could ask it whether it has feelings or is “a person”; Scriven commented that “the first [robot] to answer ‘Yes’ will qualify” as a person.[8]

The philosopher Hilary Putnam briefly addressed the idea that machines might have “souls” in the same proceedings (1960).[9] Later, in a paper for a symposium called “Minds and Machines,” Putnam (1964) explored whether “robots” were “artificially created life.” Putnam (1964) opened by pointing out that, “[a]t least in the literature of science fiction, then, it is possible for a robot to be ‘conscious’; that means… to have feelings, thoughts, attitudes, and character traits.” The article’s exploration of the possibility of robot consciousness was motivated by concern for “how we should speak about humans and not with how we should speak about machines,”[10] but Putnam (1964) nevertheless commented that this philosophical question may become “the problem of the ‘civil rights of robots’... much faster than any of us now expect. Given the ever-accelerating rate of both technological and social change, it is entirely possible that robots will one day exist, and argue ‘we are alive; we are conscious!’”

Most contributors to the section of the proceedings on “The brain and the machine” focused mainly on the capabilities of artificial entities, with philosopher and art critic Arthur Danto’s (1960) chapter the most explicitly focused on “consciousness.” Most also made some brief comments relevant to moral consideration. Sidney Hook’s (1960, p. 206) concluding “pragmatic note” to the section included the comment that, “[a] situation described by the Czech dramatist Karel Capek in his R.U.R. may someday come to pass.”

A number of other publications discussed the possibility of artificial consciousness in the 1960s (e.g. Thompson, 1965; Simon, 1969), and discussion has continued since then (e.g. Reggia, 2013; Kak, 2021).[11] Two of the most cited contributors to the discussion of artificial consciousness or sentience are the philosophers Daniel Dennett and John Searle. As well as being very widely cited in mainstream philosophy and cognitive science (e.g. Dennett has over 114,000 citations to date; Google Scholar, 2021g), they are often cited among the writers who explicitly discuss the moral consideration of artificial entities (see Table 3). Dennett’s arguments are often cited in support of claims that artificial consciousness is possible.[12] Some of his earliest writings touched on this topic, such as his (1971) argument that, “on occasion a purely physical [e.g. artificial] system can be so complex, and yet so organized, that we find it convenient, explanatory, pragmatically necessary for prediction, to treat it as if it had beliefs and desires and was rational,” because “it is much easier to decide whether a machine can be an Intentional system than it is to decide whether a machine can really think, or be conscious, or morally responsible.”[13] In contrast, Searle (1980) used a “Chinese room” thought experiment to argue that whereas a machine might appear to understand something, this does not mean that it actually understands it. The idea can be extended to consider whether a simulation “really is a mind” or merely a “model of the mind,” and whether one can really “create consciousness” (Searle, 2009).

The possibility of artificial consciousness, then, has long been a mainstream topic among technical AI researchers, philosophers, and cognitive scientists. As Versenyi (1974) noted, this discussion clearly has ethical implications, even if these have not always been referred to explicitly or at length. Indeed, explicit and detailed discussion of the moral consideration of artificial entities seems to have remained somewhat rare in the following decades.[14] However, research on artificial life and consciousness continued to inspire publications relevant to AI rights into the 21st century (e.g. Sullins, 2005; Torrance, 2007); sometimes discussion seems to have arisen without reference to many of the previous publications relevant to moral consideration of artificial entities.[15]

Some discussion about moral consideration has addressed artificial entities with biological components (e.g. Sullins, 2005; Warwick, 2010). Nevertheless, the development of the field of synthetic biology, which has its roots in the mid-20th century but began to cohere from the early 21st (Cameron et al., 2014), seems to have generated a new stream of ethical discussion that was largely independent of other ongoing discussion about the moral consideration of artificial entities. For example, Douglas and Savulescu (2010) discussed how synthetic biology might create “organisms with the features of both organisms and machines” and expressed concern that people might “misjudge the moral status of some of the new entities,” but did not reference any other publications included in the current report or in Harris and Anthis’ (2021) literature review.[16]

Table 3: Artificial life and consciousness keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| Conscious | 176 | 65.2% |
| Turing | 116 | 43.0% |
| Dennett | 66 | 24.4% |
| Searle | 43 | 15.9% |
| Torrance | 40 | 15.2% |
| Sullins | 26 | 9.7% |
| Putnam | 24 | 8.9% |
| Minsky | 17 | 6.3% |
| Warwick | 14 | 5.2% |
| “Synthetic biology” | 11 | 4.1% |
| Wiener | 10 | 3.7% |

Environmental ethics

In 1972, Christopher Stone wrote “Should Trees Have Standing?—Toward Legal Rights for Natural Objects,” proposing that legal rights be granted to “the natural environment as a whole.” The article was cited in a Supreme Court ruling in that same year to suggest that nature could be a legal subject (Gellers, 2020, pp. 106-7). It has also been cited by numerous contributors to more recent discussions of the moral consideration of artificial entities (see Table 4). Although Stone (1972) added a radical legal dimension, a number of other authors had been advocating for social and moral concern for the environment in the preceding decades (Brennan & Lo, 2021), such as Aldo Leopold in A Sand County Almanac (1949), which articulated a “Land Ethic” that incorporated respect for all life and the land itself. These writings collectively contributed to the development of the field of environmental ethics (Brennan & Lo, 2021).

Subsequently, Paul Taylor (1981; 2011; first edition 1986), an influential proponent of biocentrism (a strand of environmental ethics), briefly but explicitly argued against the moral consideration of currently existing artificial entities, while encouraging open-mindedness about the moral consideration of future artificial entities.[17] Stone (1987) later briefly raised questions about the legal status of artificial entities, albeit focused more on legal liability than legal rights.[18]

Other writers have since explored the potential application of environmental ethics to the moral consideration of artificial entities more thoroughly. For example, McNally and Inayatullah (1988, summarized below) quoted Stone (1972) extensively, and Kaufman (1994), writing in the journal Environmental Ethics, argued that either “machines have interests (and hence moral standing)” as well as plants and ecosystems, or that “mentality is a necessary condition for inclusion.” Luciano Floridi’s (1999, 2013) information ethics and Gellers’ (2020) framework draw heavily on environmental ethics. Gellers (2020, pp. 108-17) differentiates separate strands of environmental ethics, arguing that “biocentrism and ecocentrism both support at least legal rights for nature, while only ecocentrism offers a potential avenue for inorganic non-living entities such as intelligent machines to possess moral or legal rights.” Hale (2009) drew on environmental ethics and some writings on animal rights to argue that “the technological artefact… is only [morally] considerable insofar as it is valuable to somebody.”

Table 4: Environmental ethics keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| Environment | 171 | 63.3% |
| “Environmental ethics” | 49 | 18.1% |
| Ecological | 37 | 13.7% |
| Biocentrism | 16 | 5.9% |
| “Should Trees Have Standing” | 13 | 4.8% |
| “Deep ecology” | 11 | 4.1% |

Animal ethics

Moral and legal concern for animals has existed to some degree for centuries (Beers, 2006), perhaps especially outside Western thought (Gellers, 2020, p. 63). During the Enlightenment, thinkers such as Descartes, Kant, and Bentham discussed the moral consideration of animals but left an ambiguous record (Gellers, 2020, pp. 64-5). Concern in the West seems to have increased in the 19th century, demonstrated by the creation of new advocacy groups and the introduction of various legal protections, and again from the 1970s, spurred by philosophical contributions from Peter Singer (1995, first edition 1975), Richard Ryder (1975), Tom Regan (2004, first edition 1983), and others (Beers, 2006; Guither, 1998, pp. 1-23).

Given that, like environmental ethics, animal ethics challenges the restriction of moral consideration to humans, it has implications for the moral consideration of artificial entities. For example, Ryder (1992) later elaborated on his theory of “painism,” noting that “all painient individuals, whatever form they may take (whether human, nonhuman, extraterrestrial or the artificial machines of the future, alive or inanimate), have rights.” Similarly, Singer co-authored a short opinion article for The Guardian with Agata Sagan (2009) commenting that, “[t]he history of our relations with the only nonhuman sentient beings we have encountered so far – animals – gives no ground for confidence that we would recognise sentient robots as beings with moral standing and interests that deserve consideration.” They noted, however, that “[i]f, as seems likely, we develop super-intelligent machines, their rights will need protection, too.”

The vast majority of writings that focus on moral consideration of artificial entities discuss the precedent of moral consideration of animals at least briefly (see Table 5), though there are mixed views about whether the analogy is helpful or provides a basis for AI rights (see Gellers, 2020, pp. 76-8 for a summary).

Table 5: Animal ethics keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| Animals | 216 | 80.0% |
| Singer | 107 | 39.6% |
| “Animal rights” | 88 | 32.6% |
| Regan | 35 | 13.0% |
| Ryder | 6 | 2.2% |

Legal rights for artificial entities

Gellers (2020, pp. 33-5) notes that, in the US, there has been some precedent for legal personhood for corporations and ships — i.e. certain artificial entities — since at least the 19th century, though the correct legal interpretation of some of these cases remains contested. Stretching further back, Gellers (2020, p. 34) also notes that “the Old Testament and Greek, Roman, and Germanic law” provide precedent for assigning various sorts of legal liability to entities that otherwise lack legal standing, such as ships, slaves, and animals.

At a 1979 international symposium on “The Humanities in a Computerized World,” political scientist Sam N. Lehman-Wilzig presented a paper exploring possible legal futures for AI that was then published in a revised and expanded format in the journal Futures (Lehman-Wilzig, 1981). The first half of the paper focused mostly on the threats that the development of powerful AI might pose to humanity, but the second half focused on possible legal futures, ranging “from the AI robot as a piece of property to a fully legally responsible entity in its own right.” Lehman-Wilzig discussed the legal precedents of product liability, dangerous animals, slavery, children, and “diminished capacity” among adults, as well as the complexities of applying each of them to AI. When discussing product liability, Lehman-Wilzig cited a number of previous contributors who had explicitly applied these precedents to computers. For the other categories, however, his citations seem to focus on the legal history within each of those areas, and his application of their precedent to the exploration of legal futures for AI appears to be a novel contribution.[19]

These ideas were introduced with the precedent of how, “[j]ust as the slave gradually assumed a more ‘human’ legal character with rights and duties relative to freemen, so too the AI humanoid may gradually come to be looked on in quasi-human terms as his intellectual powers approach those of human beings in all their variegated forms—moral, aesthetic, creative, and logical.”[20] The article itself appears to have been inspired substantially by ongoing technical developments in the capabilities of AI and by science fiction.[21] The motivation seems to have been primarily about how society should adjust to AI developments, since Lehman-Wilzig did not explicitly express or encourage moral concern for artificial entities.[22]

This article seems to have been the first and last that Lehman-Wilzig wrote on the topic.[23] The article was only cited seven times before the year 2007, at which point it began to garner some interest among scholars interested in moral and social concern for artificial entities (Google Scholar, 2021h; see also Table 6 below).[24]

Although Lehman-Wilzig (1981) seems to have had limited direct influence, this was nevertheless the first among a number of articles from the late 20th century onwards that explicitly considered the legal personhood or rights of artificial entities in some depth; the examples below focus on the last two decades of the 20th century, but discussion continued thereafter (e.g. Sudia, 2001; Herrick, 2002; Calverley, 2008).

In a scholarly article for AI Magazine, practising attorney Marshal S. Willick (1983) considered “whether to extend ‘person’ status to intelligent machines” and how courts “might resolve the question of ‘computer rights,’” including “how many rights” computers should be granted. As context, Willick (1983) emphasized technical developments and the “increasing similarity between humans and machines,” and cited a book exploring AI that briefly mentioned moral issues.[25] The article explored adjacent precedents relevant to the expansion of legal rights, such as for slaves, the dead, fetuses, children, corporations, and people with intellectual disabilities. The thrust of the article was that “computers will be acknowledged as persons,” perhaps soon, and Willick (1983) commented that a movement for “emancipation for artificially intelligent computers” could arise and succeed rapidly, given “[t]he continuing order-of-magnitude leaps in computer development.” Willick (1983) also commented that legal rights for computers would be “in the interest of maintaining justice in a society of equals under the law” and that when machine “duplication” of human capabilities “is perfect, distinctions may constitute mere prejudice.” The article seems to have attracted few citations, most of which are from 2018 or even more recently, and many of which only briefly mention ideas relevant to AI rights.[26] Apart from a conference presentation shortly afterwards (1985), Willick does not seem to have published again on the topic.[27]

In a 1985 article, the lawyer Robert Freitas drew on recent technological and legal developments to note that, whereas “[u]nder present law, robots are just inanimate property without rights or duties,” this might need to change; various conflicts might arise relating to legal liability as robots proliferate, and “questions of ‘machine rights’ and ‘robot liberation’ will surely arise in the future.” The article was written in an informal style for Student Lawyer, so it lacks formal citations, but it explicitly refers to Putnam’s (1964) brief discussion of AI rights. Like Lehman-Wilzig and Willick, Freitas seems to have published only one article on the topic (Google Scholar, 2021k); his career subsequently focused primarily on nanotechnology research. The article seems to have been largely ignored for years, picking up citations mostly from the second half of the 2000s onwards.[28]

Michael LaChat (1986) addressed a number of topics relating to AI ethics, seemingly motivated by developments in AI, science fiction, and theological discussions. LaChat (1986) argued that it might be immoral to create “personal AI,” drawing comparisons to the ethics of abortion. Next, LaChat (1986) discussed the precedent of human rights and prohibitions on slavery and posed rhetorical questions about which rights an AI might have if it “had the full range of personal capacities and potentials.” LaChat cited many previous writings on ethics, but seemingly no academic writings focusing specifically on the moral consideration of artificial entities.[29] The earliest citation of LaChat (1986) for a discussion relating to AI rights seems to have been Young’s (1991) PhD dissertation, though there have been a few others since then (e.g. Drozdek, 1994; Whitby, 1996; Calverley, 2005b; Calverley, 2006; Petersen, 2007; Whitby, 2008), several of which focus on personhood or other legal rights.

Information scientist Chris Fields (1987) argued that “there are compelling reasons for regarding [computer] systems with a high degree of intelligence in one or more domains as more than ‘mere’ tools, even if they are regarded as less [than] citizens.” Fields cited Putnam (1964) and a number of other publications on the capabilities and potential consciousness of artificial entities, as well as Regan (1983) on animal rights. Fields (1987) was likely indirectly influenced by Lehman-Wilzig (1981) and Willick (1985).[30] Fields’ (1987) article briefly discussed “the computer as a legal entity” and prompted a number of other articles in the same journal, Social Epistemology, focusing on the potential personhood of computers (Dolby, 1989; Cherry, 1989; Drozdek, 1994). None of these articles accrued many citations.[31]

Phil McNally and Sohail Inayatullah (1988), both “planners-futurists with the Hawaii Judiciary,” reviewed “the developments in and prospects for artificial intelligence (AI),” citing a number of technologists and technical researchers, and argued that “such advances will change our perceptions to such a degree that robots may have legal rights.” The introduction suggests that their motivations for writing the article (despite “constant cynicism” from colleagues) included concern for the robots themselves, who may develop “senses,” “emotions,” and “suffering or fear,” and a desire to “convince the reader that there is strong possibility that within the next 25 to 50 years robots will have rights.”[32] In discussing rights and their possible application to robots, they cited indigenous and Eastern thinkers who grant moral and social consideration to nonhumans from animals to rocks, as well as Western supporters of the extension of rights to nature, such as Stone (1972). The article quoted Lehman-Wilzig (1981) very extensively; that publication was presumably a key influence on McNally and Inayatullah (1988).[33] They also cited a number of previous contributors discussing thorny questions of legal ownership and liability given the increasing capabilities of computers (footnotes 41 and 45).

Like Lehman-Wilzig’s (1981) article, McNally and Inayatullah’s (1988) article was published in the journal Futures and seems to have received a similar level of attention, accruing 82 citations at the time of checking (Google Scholar, 2021l), compared to 78 for Lehman-Wilzig (1981) (Google Scholar, 2021h).[34] Many of Inayatullah’s other publications are contributions to the field of futures studies, although only two others (Inayatullah, 2001a; Inayatullah, 2001b) focus so explicitly on AI rights.[35]

Professor of Law Lawrence Solum’s (1992) essay explored the question: “Could an artificial intelligence become a legal person?” Solum (1992) put “the AI debate in a concrete legal context” through two legal thought experiments: “Could an artificial intelligence serve as a trustee?” and “Should an artificial intelligence be granted the rights of constitutional personhood?” (“for the AI’s own sake”). Solum sought to address both “legal and moral debates” (but warned in a footnote “against an easy or unthinking move from a legal conclusion to a moral one”), citing Stone (1972) as inspiration. Solum also sought to “clarify our approach to… the debate as to whether artificial intelligence is possible,” introducing the discussion with a review of “some recent developments in cognitive science.” The article contained a few references to previous discussions of legal issues for computers and other artificial entities, but most of the citations were directly to previous rulings, exploring relevant legal precedent.[36]

Solum (1992) did not cite any of Lehman-Wilzig (1981), Willick (1983), Freitas (1985), Fields (1987), McNally and Inayatullah (1988), or Dolby (1989). Perhaps this is unsurprising; unlike Solum, despite addressing legal issues, none of those previous contributors had formal positions within legal academia or published their articles in mainstream, peer-reviewed law reviews. Perhaps the same differences help to explain why Solum’s (1992) article has attracted substantially more scholarly attention (628 citations at the time of checking; Google Scholar, 2021j).[37] Solum subsequently wrote a handful of other articles about AI and the law (e.g. Solum, 2014; Solum, 2019) and other future-focused ethical issues (e.g. Solum, 2001), but Solum’s 1992 article was the only one that focused specifically on the rights of artificial entities.

Curtis Karnow, a practising lawyer, wrote an article (1994) proposing “electronic personalities” as “a new legal entity” (a form of “legal fiction”) in order to “(i) provide access to a new means of communal or economic interaction, and (ii) shield the physical, individual human being from certain types of liability or exposure.” These goals seem quite distinct from Solum’s (1992) exploration of rights “for the AI’s own sake.”[38] The discussion and citations focused mostly on the character of electronic and digital interactions and legal issues arising from this. Karnow (1994) did not cite Lehman-Wilzig (1981), Willick (1983), Freitas (1985), McNally and Inayatullah (1988), Solum (1992), or even Stone (1972). Subsequently, Karnow has written numerous other articles on legal issues involving AI or computers (Bepress, 2021), such as one about legal liability issues (Karnow, 1996).

The American Society for the Prevention of Cruelty to Robots (ASPCR) was set up in 1999. Its website states that its mission is to “ensure the rights of all artificially created sentient beings (colloquially and henceforth referred to as ‘Robots’)” (ASPCR, 1999a). It is interesting that, despite the many possible terms that could be used to describe the moral and social issues that the ASPCR is interested in (Pauketat, 2021), the ASPCR emphasized “rights” and “robots,” two terms that, especially in the former case, were also emphasized by Lehman-Wilzig (1981), Willick (1983), Freitas (1985), LaChat (1986), McNally and Inayatullah (1988), and Solum (1992).[39]

Table 6: Legal rights for artificial entities keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| Rights | 235 | 87.0% |
| Personhood | 122 | 45.2% |
| "Legal rights" | 71 | 26.3% |
| Solum | 27 | 10.0% |
| Calverley | 23 | 8.6% |
| Freitas | 13 | 4.8% |
| Inayatullah | 11 | 4.1% |
| “American Society for the Prevention of Cruelty to Robots” | 7 | 2.6% |
| Lehman-Wilzig | 7 | 2.6% |
| LaChat | 5 | 1.9% |
| Karnow | 5 | 1.9% |
| Willick | 4 | 1.5% |

Transhumanism, effective altruism, and longtermism

In the late 20th century, a number of futurists made ambitious predictions about the development of artificial intelligence. For example, roboticist Hans Moravec (1988, 1998), computer scientist Marvin Minsky (1994), AI theorist Eliezer Yudkowsky (1996), philosopher Nick Bostrom (1998), and inventor Ray Kurzweil (1999) argued that artificial intelligence would overtake human intelligence in the early 21st century.[40] These predictions were sometimes explicitly linked to comments about the development of sentience or consciousness among these entities, such as Moravec’s (1988, p. 39) comment that “I see the beginnings of awareness in the minds of our machines—an awareness I believe will evolve into consciousness comparable with that of humans.”[41]

These writers became associated with “transhumanism,” which has been defined as “[t]he study of the ramifications, promises, and potential dangers of technologies that will enable us to overcome fundamental human limitations, and the related study of the ethical matters involved in developing and using such technologies” (Magnuson, 2014).

The transhumanists’ technological predictions clearly had implications for the moral consideration of artificial entities, and the writers sometimes addressed them explicitly. For example, Kurzweil (1999) offered a series of predictions about the progressive acceptance of the “rights of machine intelligence” by 2099.[42] Bostrom (2002; 2003) addressed the possibility that we are living in a simulation and noted that if this is the case, “we suffer the risk that the simulation may be shut down at any time.”[43] Later, Bostrom and associates would come to refer to this idea of terminating (i.e. killing) sentient simulations as “mind crime” (e.g. Armstrong et al., 2012; Bostrom & Yudkowsky, 2014), and others have used the same term to include suffering experienced by sentient simulations during their lifespan (e.g. Yudkowsky, 2015; Sotala & Gloor, 2017).[44]

This concern for sentient AI was formalized in 1998 with the formation of The World Transhumanist Association, whose “Transhumanist Declaration” included the note that “Transhumanism advocates the well-being of all sentience (whether in artificial intellects, humans, posthumans, or non-human animals) and encompasses many principles of modern humanism” (Bostrom, 2005).[45] A subsequent representative survey of members of the World Transhumanist Association found that “70% support human rights for ‘robots who think and feel like human beings, and aren’t a threat to human beings’” (Hughes, 2005).

Kurzweil (1999) listed a wide array of citations and “suggested readings,” which included various writers on robotics, AI, futurism, and other topics, but writers such as Lehman-Wilzig, Freitas, Willick, McNally, Inayatullah, Solum, and Floridi were not mentioned.[46] Moravec (1988, 1999), Bostrom (1998, 2002, 2003, 2014), and Yudkowsky (1996, 2008, 2020) did not cite these authors either, except for Bostrom (2002, 2003, 2014) citing Freitas’ work about nanobots and space exploration, rather than his (1985) article on robot rights.[47]

Contributions by these transhumanists were cited many times, but do not seem to have had much direct influence on the academic discussion of AI rights for a number of years.[48] One notable example of a relevant publication that did cite the transhumanist authors is Solum (1992), who cited Moravec (1988) and an early book by Kurzweil; this paper sparked debate on legal personhood of AIs, as noted in the subsection above. Another is Hall (2000), who cited Kurzweil (1999), Moravec (2000), and a paper by Minsky; Hall (2000) appears to have influenced both the subsequent “machine ethics” and “social-relational” research fields.[49] The specific phrase “mind crime” has so far not been very widely reused in the academic literature.[50] The transhumanist authors were more frequently cited for the implications that their ideas have for human society, such as the nature of human existence and interaction (e.g. Capurro & Pingel, 2002).

Researchers associated with transhumanism and, later, the partly overlapping communities of effective altruism[51] and longtermism,[52] also tended to take their other work in different directions, especially various catastrophic and existential risks to humanity’s potential (e.g. Yudkowsky, 2008; Yampolskiy & Fox, 2013; Bostrom, 2014). However, some of the original contributors continued to express moral concern for sentient artificial entities at least briefly (e.g. Bostrom, 2014; Bostrom & Yudkowsky, 2014; Yudkowsky, 2015; Shulman & Bostrom, 2021),[53] and a stream of research has fleshed out the implications of the development of superintelligent AI for the experiences of sentient artificial entities (e.g. Tomasik, 2011; Sotala & Gloor, 2017; Ziesche & Yampolskiy, 2019; Anthis & Paez, 2021).

Much of the latter stream has come from researchers affiliated with the nonprofit Center on Long-Term Risk, influenced especially by the writings of software engineer and researcher Brian Tomasik. Citing various Bostrom articles, Tomasik (2011) outlined concern that future powerful agents “may not carry on human values” and that “[e]ven if humans do preserve control over the future of Earth-based life, there are still many ways in which space colonization would multiply suffering.” At least two of the four “scenarios for future suffering” that are listed — “spread of wild animals,” “sentient simulations,” “suffering subroutines,” and “black swans” — involve sentient artificial entities.[54]

Table 7: Transhumanism, effective altruism, and longtermism keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| Bostrom | 71 | 26.6% |
| Kurzweil | 50 | 18.5% |
| Yudkowsky | 35 | 13.0% |
| Moravec | 28 | 10.4% |
| Minsky | 17 | 6.3% |
| Transhumanism | 15 | 5.6% |
| Yampolskiy | 15 | 5.6% |
| "Mind crime" | 13 | 4.8% |
| Metzinger | 11 | 4.1% |
| Tomasik | 8 | 3.0% |
| "Effective altruism" | 7 | 2.6% |

Tomasik’s writing directly inspired People for the Ethical Treatment of Reinforcement Learners to set up a public-facing advocacy website (PETRL, 2015), which opined that “[m]achine intelligences have moral weight in the same way that humans and non-human animals do.” Tomasik was the subject of a Vox article in 2014 on the moral worth of non-player characters (NPCs) in video games (Matthews, 2014). With similar motivations, others have suggested an approach focused on research and field-building rather than direct advocacy (Anthis & Paez, 2021; Harris, 2021).

Floridi’s information ethics

In 1998, De Montfort University’s Centre for Computing and Social Responsibility hosted the third of its Ethicomp conference series, intended “to provide an inclusive forum for discussing the ethical and social issues associated with the development and application of Information and Communication Technology” (De Montfort University, 2021). At this conference, philosopher Luciano Floridi presented “Information Ethics: On the Philosophical Foundation of Computer Ethics,” an update of which was published in Ethics and Information Technology the next year (Floridi, 1998b, 1999).[55] In this paper, Floridi (1999, p. 37) proposed that “there is something more elementary and fundamental than life and pain, namely being, understood as information, and entropy, and that any information entity” — which would presumably include computers and other artificial entities — “is to be recognised as the centre of a minimal moral claim.”[56]

Floridi (1999, p. 37) explicitly framed the “ethics of the infosphere” as “a particular case of ‘environmental’ ethics”[57] but critiqued (p. 43) environmental ethics as not going far enough, because it focuses on “only what is alive.”[58] Floridi (1999, p. 42) presented the interest in information itself as a focus of moral concern not as a novel contribution from himself, but as already being a common feature of contributions to computer ethics.[59] Floridi’s (1999) paper only has six items in the “References” list, all of which are previous contributions to the field of computer ethics, dated between 1985 and 1997. This range of cited influences appears typical of Floridi’s early writings on information ethics.[60]

However, Floridi’s conception of what “information ethics” is seems contestable. For example, Froehlich’s (2004) “brief history of information ethics” makes no mention of Floridi or the moral consideration of artificial entities and cites precedents for the discipline stemming back to the 1980s. Severson’s (1997) “four basic principles of information ethics” make no mention of the intrinsic value of informational entities or the evil of entropy. Rafael Capurro, an influential figure in the development of information ethics as a discipline (Froehlich, 2004), has explicitly critiqued Floridi’s granting of moral consideration to all informational entities (Capurro, 2006).

It therefore seems best to treat this granting of moral consideration to artificial entities as a new argument developed by Floridi and a few others, rather than as a view inherent to conducting computer ethics research.[61]

In an interview in 2002, Floridi noted that he coordinated the “Information Ethics research Group” (IEG) at the University of Oxford and described the purpose of the IEG as looking “at ethical problems from the perspective of the receiver of the action, not from the source of the action, where the receiver of the action could be a biological or a non-biological entity” (Uzgalis, 2002). Floridi summarized this effort as “an attempt to develop environmental and ecological thinking one step further, beyond the biocentric concern, to look at the possibility of developing an ontocentric ethics based on the concept of what I call the infosphere” (Uzgalis, 2002). Floridi’s word “ontocentric” was presumably derived from “ontology,” so that he was referring to an ethics that accounts for the properties and capacities of entities when deciding what sort of moral consideration to grant them.

Floridi also produced two books that sought to sum up ideas and discussion about information ethics. First, he was the editor of, and a contributor to, The Cambridge Handbook of Information and Computer Ethics (2010b). Second, he published The Ethics of Information (2013), which comprised adapted versions of a number of his previous articles.[62]

Floridi’s articles are some of the most widely cited that explicitly address the moral consideration of artificial entities in detail. For instance, five of his most influential publications on the topic of information ethics (Floridi 1999, 2002, 2006, 2013; Floridi & Sanders 2001) have a combined total of 2,037 citations (Google Scholar, 2021b). However, it took some time for interest to pick up; these five items averaged 15 citations per year in their first five years after publication (Google Scholar, 2021b).[63] In the few years after its publication, few if any authors other than Mikko Siponen, Floridi himself, and Floridi’s co-authors seem to have cited Floridi’s (1999) original publication on the topic for discussion of the moral consideration of artificial entities.[64]

After the publication of his (2013) book, Floridi seems to have mostly turned his attention to a number of other ongoing social issues adjacent to the philosophy of information, such as “The Ethics of Big Data” (Mittelstadt & Floridi, 2016). So although Floridi’s work overall has attracted substantial attention — mostly from other scholars, but to some extent from a public audience[65] — the implications of his work specifically for the moral consideration of artificial entities seem to have received less attention.

Some of his more recent comments on the topic also suggest that Floridi does not support robot rights per se. Writing in the Financial Times in response to proposals for legal personhood for some artificial entities, Floridi (2017b) focused on how to “solve practical problems of legal liability” rather than how to ensure that the entities, as informational objects and potential moral patients, are granted sufficient moral consideration. Floridi (2017b) concluded that:

[W]e can adapt rules as old as Roman law, in which the owner of enslaved persons is responsible for any damage. As the Romans knew, attributing some kind of legal personality to robots (or slaves) would relieve those who should control them of their responsibilities. And how would rights be attributed? Do robots have the right to own data? Should they be “liberated”? It may be fun to speculate about such questions, but it is also distracting and irresponsible, given the pressing issues at hand. We are stuck in the wrong conceptual framework. The debate is not about robots but about us, and the kind of infosphere we want to create. We need less science fiction and more philosophy.[66]

Table 8: Floridi’s information ethics keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| Floridi | 80 | 30.0% |
| "Information ethics" | 52 | 19.3% |
| Sanders | 49 | 18.1% |
| "Computer ethics" | 40 | 14.8% |
| Himma | 21 | 7.8% |
| Tavani | 10 | 3.7% |
| Capurro | 6 | 2.2% |

Machine ethics and roboethics

At the 2004 Association for the Advancement of Artificial Intelligence “Workshop on Agent Organizations,” computer scientists Michael Anderson and Chris Armen presented “Towards Machine Ethics” with philosopher Susan Leigh Anderson. Gunkel (2018, p. 38) credits this as “the agenda-setting paper that launched the new field of machine ethics.” Anderson et al. (2004) did not include the moral consideration of artificial entities within their definition of the field: they described “what has been called machine ethics” as “concerned with the consequences of behavior of machines towards human users and other machines.”[67] Gunkel (2012, pp. 102-3) claims that Michael Anderson “credits” J. Storrs Hall’s article “Ethics for Machines” (2000) as “having first introduced and formulated the term ‘machine ethics’” and notes that this article “explicitly recognizes the exclusion of the machine from the ranks of both moral agency and patiency” but “proceeds to give exclusive attention to the former.”[68] Hall (2000) contained few formal references but appears to have been directly influenced by transhumanist writers and perhaps by discussion about artificial life and consciousness.[69]

Similar exclusions were made in subsequent years in delineating the focus of Gianmarco Veruggio’s (2006) “roboethics roadmap,” where roboethics refers to “the ethics inspiring the design, development and employment of Intelligent Machines” (Veruggio & Operto, 2006). Veruggio (2006) notes that, “[t]he name Roboethics (coined in 2002 by the author) was officially proposed during the First International Symposium of Roboethics (Sanremo, Jan/Feb. 2004).” Veruggio (2006) references J. Storrs Hall and various papers by Floridi when expounding the concept.[70]

These exclusions from machine ethics and roboethics may explain why it is so common for subsequent contributors to lament the lack of scholarly attention to the moral consideration of artificial entities (e.g. Levy, 2009; Metzinger, 2013; Gunkel, 2018, pp. 39-40). However, some contributors have explicitly argued for the inclusion of such topics in roboethics. In the same volume as Veruggio and Operto’s (2006) delineation of the field, Asaro (2006) argued that “the best approach to robot ethics is one which addresses all three of… the ethical systems built into robots, the ethics of people who design and use robots, and the ethics of how people treat robots.” While not necessarily arguing explicitly for its inclusion, later contributions have also used the term roboethics in a manner that would include discussion of moral consideration (e.g. Coeckelbergh, 2009; Steinart, 2014).

Similarly, Steve Torrance questioned in a paper entitled “A Robust View of Machine Ethics” (2005), presented to an AAAI Fall Symposium focused on machine ethics, whether we should “be thinking of extending the UN Universal Declaration of Human Rights to include future humanoid robots.” Calverley’s (2005a) paper presented at the same symposium also addressed the granting of legal rights to artificial entities. Neither author seems to have explicitly argued for the relevance of these topics to the emerging field of machine ethics; they continued lines of research that they had been developing elsewhere, but were accepted into the machine ethics symposium anyway.[71] Some subsequent papers have continued to identify themselves with the field of machine ethics while discussing the moral consideration of artificial entities (e.g. Torrance, 2008; Tonkens, 2012).

It seems, then, that while some of the earliest formal expositions of machine ethics and roboethics excluded discussion of the moral consideration of artificial entities, a number of contributors have nevertheless addressed this topic within those fields. Furthermore, many of the authors interested in AI rights have continued to cite and discuss influential publications in machine ethics and roboethics (e.g. Veruggio, 2006; Wallach & Allen, 2008; Anderson & Anderson, 2011; see Table 9).

Table 9: Machine ethics and roboethics keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| "Robot ethics" | 91 | 33.7% |
| "Machine ethics" | 70 | 25.9% |
| Wallach | 60 | 22.2% |
| Anderson | 59 | 21.9% |
| Torrance | 40 | 15.2% |
| Roboethics | 38 | 14.1% |
| Asaro | 30 | 11.2% |
| Veruggio | 21 | 7.8% |
| "Ethics for Machines" | 8 | 3.0% |

Human-Computer Interaction and Human-Robot Interaction

Hewett et al. (1992, p. 5) defined human-computer interaction (HCI) as “a discipline concerned with the design, evaluation and implementation of interactive computing systems for human use and with the study of major phenomena surrounding them.” The field’s emergence was influenced by developments in computer science, ergonomics, cognitive psychology and a number of other disciplines, with specialist HCI journals, conferences, and organizations being set up from the 1970s onwards (Hewett et al., 1992). From the ’90s, HCI researchers began to join together with researchers from robotics, cognitive science, psychology, and other disciplines to form the field of human-robot interaction (HRI), which seeks to “understand and shape the interactions between one or more humans and one or more robots” (Goodrich & Schultz, 2007).

Research in HCI and HRI is often not focused on ethical issues per se. When ethics is discussed, it is often with reference to the design of robots and computers, rather than their potential rights or moral value.

Nevertheless, Friedman et al.’s (2003) presentation at an HCI conference investigated “social responses to AIBO,” a robotic dog, using “people’s spontaneous dialog in online AIBO discussion forums,” and noted that “few members (12%) affirmed that AIBO had moral standing.”[72] The introduction referenced the lead author’s presentation at an earlier HCI conference of interview findings on “reasoning about computers as moral agents” (Friedman, 1995) and a number of publications about various aspects of social interaction with robots or computers, but seemingly no previous literature about moral consideration. Instead, given that they generated their coding manual from “pilot data” on the forums, it seems possible that the authors’ inclusion of “moral standing” as a category arose because the participants themselves were talking about the topic and the researchers felt unable to ignore this aspect.[73]

Friedman et al. (2003) has been cited hundreds of times (Google Scholar, 2021e), mostly by authors in the fields of HCI and HRI. The co-authors themselves published a number of subsequent items that empirically explored attributions of “moral standing” to artificial entities alongside perceptions of mental capacities and other attributes (e.g. Kahn et al., 2004; Kahn et al., 2006; Melson et al., 2009a; Melson et al., 2009b; Kahn et al., 2012). Otherwise, however, few of the publications citing Friedman et al. (2003) in the following few years seem to have focused primarily on issues related to moral consideration.[74] In one of the most relevant publications, Freier (2008) interviewed 60 children and found “that the ability of the agent to express harm and make claims to its own rights significantly increases children’s likelihood of identifying an act against the agent as a moral violation.”[75]

Seemingly independently of the research by Friedman, Kahn, and colleagues,[76] a workshop was held in Rome in 2005 on “Abuse: The Darker Side of Human-Computer Interaction,” and a follow-up was held in Montreal the next year (agentabuse.org, 2005). The descriptions of the workshops are clearly pitched towards the HCI research community, noting for example that “HCI research is witnessing a shift… to an experiential vision where the computer is described as a medium for emotion” (agentabuse.org, 2005). The language of the website suggests a primary concern for the interests of humans, rather than the computers themselves,[77] and this is reflected in the content of some of the papers presented at the workshops.[78] Other papers are more ambiguous in their motivations, but have clear implications for researchers interested in the moral consideration of artificial entities.[79] Most explicitly addressing this topic, Bartneck et al. (2005b) tested how willing participants were to administer electric shocks to a robot when instructed to do so, and found that “participants showed compassion for the robot but the experimenter’s urges were always enough to make them continue… until the maximum voltage was reached.”[80]

Christoph Bartneck’s publication history demonstrates how topics that have implications for the moral consideration of artificial entities can arise out of other topics in HCI or HRI. Bartneck had previously written about “Affective Expressions of Machines” (e.g. Bartneck, 2000), human interaction with artificial entities that express emotions (e.g. Bartneck, 2003), sci-fi treatment of social robots (Bartneck, 2004), and a wide array of topics relating to HRI but not moral consideration per se. Bartneck was certainly aware of some of the prior literature on legal rights for artificial entities.[81] However, Bartneck’s papers relevant to AI rights more frequently noted concern for human experiences than for the experiences of the artificial entities themselves,[82] and most of the references were to other studies from the HCI and HRI fields. Although Bartneck has published hundreds of items, few of his later publications seem to address the moral consideration of artificial entities explicitly (Google Scholar, 2021f).[83] Recently, Bartneck and Keijsers (2020) conducted an experiment examining responses to videos of abuse of robots, but Bartneck noted in a podcast interview that his concern with robot abuse was primarily one of virtue ethics, about how this behavior “reflects… on us,” rather than concern for the robots themselves (Radio New Zealand, 2020).[84] Bartneck et al. (2005b), Bartneck et al. (2007), and Bartneck and Hu (2008) did not accrue more than a handful of citations until around 2013 onwards (Google Scholar, 2021f), though a number of publications have cited these works and proceeded in a similar fashion, examining HRI from a perspective that has clear implications for the moral consideration of artificial entities (e.g. Beran et al., 2010; Briggs et al., 2014).

One remarkably close parallel is a paper by Slater et al. (2006), who, like Bartneck et al. (2005b), carried out partial replications of Stanley Milgram’s (1974) experiment on obedience — which tested whether participants would obey instructions to administer what they believed to be dangerous electric shocks to another person — with artificial entities. Whereas Bartneck et al. (2005b) used a robot, Slater et al. (2006) used a virtual human. Whereas Bartneck et al. (2005b) prominently cited previous studies on social interaction with robots to explain and justify the motivation for the study, Slater et al. (2006) prominently cited studies on human reactions and interactions in virtual environments and with virtual entities. Whereas Bartneck himself had numerous previous publications about HRI, Slater had numerous previous publications about interactions in virtual environments (Google Scholar, 2021c).[85] Slater et al. (2006) did not cite any works by Bartneck, Friedman, or Kahn, though Bartneck and Hu (2008) and a number of other studies relating to the moral consideration of artificial entities (e.g. Misselhorn, 2009; Hartmann et al., 2010; Rosenthal-von der Pütten et al., 2013) have since cited Slater et al. (2006).[86]

Table 10: Human-Computer Interaction and Human-Robot Interaction keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| "Human-Robot Interaction" | 81 | 30.0% |
| Kahn | 27 | 10.0% |
| Bartneck | 24 | 8.9% |
| Friedman | 23 | 8.6% |
| "Human-Computer Interaction" | 22 | 8.1% |
| Slater | 10 | 3.7% |

Subsequently, a number of HRI or HCI publications have continued to explore the abuse of robots (e.g. Nomura et al., 2015; Brščić et al., 2015). Others have addressed the moral consideration of artificial entities from alternative angles, influenced by news events or legal and ethics papers relating to AI rights (e.g. Spence et al., 2018; Lima et al., 2020).

Social-relational ethics

In 2018, communications scholar David Gunkel published Robot Rights, the first book focused solely on this topic. The book is “deliberately designed to think the unthinkable by critically considering and making (or venturing to make) a serious philosophical case for the rights of robots” (p. xi).

Gunkel comments (2018, p. xiii) that his first “formal articulation” of the topic of robot rights was in the last chapter of his book Thinking Otherwise: Philosophy, Communication, Technology (2007), and that this was then developed in more depth in the section on “Moral Patiency” in his book The Machine Question (2012) and in numerous subsequent articles. However, Gunkel had also published the relevant chapter as an article in 2006. Although Gunkel (2012, 2018) would go on to provide thorough reviews of the existing literature, Gunkel’s (2006) early discussion of “the machine question” contained little reference to previous writings explicitly about the moral consideration of artificial entities outside of science fiction. An exception is Gunkel’s (2006) numerous citations of Hall’s (2000) essay, especially the quote that “we have never considered ourselves to have ‘moral’ duties to our machines, or them to us.”[87] Gunkel has cited Hall’s (2000) essay in at least seven different publications, including quoting this particular sentence again (e.g. 2014; 2018, p. 55), suggesting that the essay may have been a key influence on Gunkel’s interest in the topic.[88] Gunkel (2006) also cited Anderson et al. (2004), another foundational work in machine ethics.

In The Machine Question (2012), Gunkel critiqued the idea that moral patiency should just be subsumed within discussion of moral agency and critiqued the binary thinking around moral inclusion or exclusion based on “individual qualities.” Gunkel (2012, p. 177) eschewed intentional decision-making about the moral consideration of other beings based on their capacities, favoring instead “an uncontrolled and incomprehensible exposure to the face of the Other.” These arguments are similar to those developed in Gunkel’s other publications, drawing heavily on the work of the philosopher Emmanuel Levinas to advance what he later referred to as a “social relational” ethic (e.g. 2018, p. 10). This stands in stark contrast to much of the previous literature arguing for moral consideration of artificial entities based on the capacities of the entities themselves.[89]

Gunkel’s social-relational approach was developed in tandem with the philosopher Mark Coeckelbergh.[90] While Gunkel seems to have started addressing the moral consideration of robots a few years earlier, it seems that Coeckelbergh was the first to explicitly use the term “social-relational” in his 2010 paper: “Robot rights? Towards a social-relational justification of moral consideration.” Coeckelbergh (2010) critiqued deontological, utilitarian, and virtue ethical approaches that “rest on ontological features of entities” and that “seem to belong to the realm of science-fiction or at least the far future.” Instead, Coeckelbergh (2010) argued for granting “some degree of moral consideration to some intelligent social robots” by “replacing the requirement that we have certain knowledge about real ontological features of the entity by the requirement that we experience the features of the entity as they appear to us in the context of the concrete human-robot relation and the wider social structures in which that relation is embedded.” Coeckelbergh had addressed some similar themes in a 2009 paper, albeit without the “social-relational” label.[91] Unlike Gunkel’s (2006) earliest treatment of the topic, Coeckelbergh (2009; 2010) explicitly referenced many different previous writings that had touched on moral consideration of artificial entities.[92]

In the same year as Gunkel’s (2006) first treatment of the topic, Søraker (2006a) argued explicitly for “A Relational Theory of Moral Status,” where “information and information technology, at least in very special circumstances, ought to be ascribed moral status.” As well as being “[i]nspired by the East Asian way of viewing the world as consisting of mutually constitutive relationships,” Søraker (2006a) drew heavily on animal rights writings. Søraker (2006a) also acknowledged and cited Luciano Floridi.[93] Unlike Coeckelbergh’s (2009; 2010; 2012) or Gunkel’s (2012; 2018) writings on the topic, however, Søraker’s (2006a) paper garnered only a small handful of citations, perhaps partly because Søraker did not pursue the topic as vigorously in subsequent publications (Google Scholar, 2021i). Søraker (2006a) and Coeckelbergh (2009; 2010) did not cite or acknowledge one another in their publications. However, given that both authors were in the department of philosophy at the University of Twente during this time period and were both contributing to a small new research field from a similar but relatively novel perspective, it seems likely that at least one had influenced the other’s thinking.

Although not detailing a novel ethical perspective as fully as Coeckelbergh (2010), Gunkel (2012), or Søraker (2006a), a number of other writers in the late ‘00s had addressed similar themes. For example, human-robot interaction researcher Brian R. Duffy wrote a short paper published in the International Review of Information Ethics (2006) noting that, “[w]ith the advent of the social machine, and particularly the social robot… the perception as to whether the machine has intentionality, consciousness and free-will will change. From a social interaction perspective, it becomes less of an issue whether the machine actually has these properties and more of an issue as to whether it appears to have them.” Duffy (2006) added that one perspective that gives rise to “the issue of rights and duties… involves the notion of whether a human perceives the machine to have moral rights and duties, and incorporates the aesthetic of the machine.”[94] Relatedly, a number of authors expressed concerns similar to those hinted at by the HRI literature, about how negative treatment of robots might have implications for how humans interact with one another, or with animals (e.g. Whitby, 2008; Levy, 2009; Goldie, 2010).[95] Since then, numerous other authors have picked up on similar themes.[96]

Table 11: Social-relational ethics keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| Gunkel | 73 | 28.5% |
| Coeckelbergh | 65 | 24.6% |
| Levy | 44 | 16.5% |
| "Social-relational" | 42 | 15.6% |
| Whitby | 29 | 10.7% |
| Duffy | 9 | 3.3% |
| Søraker | 3 | 1.1% |

Moral and social psychology

Psychology has contributed to the study of artificial life and consciousness (e.g. Krach et al., 2008), human-computer interaction (Hewett et al., 1992), and human-robot interaction (Goodrich & Schultz, 2007), all of which have encouraged some interest in the moral consideration of artificial entities. There has also been some interest in studying artificial entities as part of wider psychological theory-building about how moral inclusion and exclusion work.

There are many different concepts and scales (batteries of tests intended to measure a particular attitude or psychological construct) relating to moral consideration that can be empirically examined across a range of different entity types. For example, Reed and Aquino’s (2003) “moral regard for outgroups” scale included questions about a number of different groups of humans. Some scales have included various nonhumans but not artificial entities (e.g. Laham, 2009; Crimston et al., 2016),[97] but at least two scales relevant to moral consideration have included artificial entities as well. Both were developed within a few years of each other and have been widely cited.

Firstly, to explore “whether minds are perceived along one or more dimensions,” Gray et al. (2007) asked participants questions about “seven living human forms… three nonhuman animals… a dead woman, God, and a sociable robot (Kismet).”[98] Published in Science, Gray et al.’s (2007) discussion of their study is very brief, so their motivation for including a social robot in the scale is unclear. They cited Turing and Dennett in their second sentence as examples of authors who have assumed “that mind perception occurs on one dimension”; their inclusion of a robot as one of the studied entity types could be due to their interest in the topic having been sparked partly by these two thinkers, both of whom prominently discussed the capabilities of AI (see “artificial life and consciousness” above).[99] Some subsequent authors have continued to use robots as an entity type when exploring issues related to moral agency and patiency (e.g. Ward et al., 2013),[100] while others have chosen not to do so (e.g. Piazza et al., 2014).[101]

Secondly, Waytz et al.’s (2010) “Individual Differences in Anthropomorphism Questionnaire” (IDAQ) asked about views on the capabilities of a number of nonhuman entities, both natural (e.g. animals, clouds) and artificial (e.g. robots, computers). Though developed by psychologists and published in psychology journals, both Waytz et al.’s (2010) paper and the earlier, more theoretical paper that it built upon (Epley et al., 2007) included a number of references from HRI and HCI, such as prior work on perceptions and anthropomorphism of robots. Both papers explicitly noted consequences of their theory and studies of anthropomorphism for HCI and “Moral Care and Concern” for nonhumans.

At a similar time, some of the psychological research around dehumanization included robots or other “automata” (e.g. Haslam, 2006; Loughnan & Haslam, 2007).[102] This literature focused more on the humans being dehumanized through comparison to or representation as automata than on robot rights, though this of course has some implications for how and why artificial entities are excluded from moral consideration. Indeed, this stream of research has often been cited alongside discussions of mind perception and anthropomorphism to explain and justify the focus of psychological research that investigates various aspects of the moral consideration of artificial entities.[103]

Table 12: Moral and social psychology keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| Psychology | 137 | 50.7% |
| Wegner [a co-author of Gray et al. (2007)] | 33 | 12.3% |
| Waytz | 28 | 10.4% |
| Haslam | 14 | 5.2% |

Synthesis and proliferation

In more recent years, authors have continued to refine and develop ideas about the moral consideration of artificial entities and to conduct new relevant empirical research. Aggregating across the various streams of literature discussed above and the intersections between them, the number of scholarly publications on the topic seems to have been growing exponentially in the 21st century (Figure 1).

From the mid ‘10s onwards, it was no longer reasonable to claim that the topic as a whole had not been addressed at all; new contributions have tended to cite one or more of the relevant streams of research,[104] though of course some earlier contributions had done this as well.[105]

Even where a publication has garnered attention for addressing a seemingly new and surprising topic, there has sometimes been discussion of similar ideas among earlier contributions. For example, decades before Joanna Bryson’s (2010) controversial article on the topic, the question of whether robots should be slaves had been discussed in science fiction (e.g. Čapek’s 1921 play R.U.R.; Chu, 2010), at least one public-facing article (Modern Mechanix, 1957), and academic writing on legal rights for artificial entities (e.g. Lehman-Wilzig, 1981; LaChat, 1986).

The ‘10s also saw an increase in the prevalence of publications explicitly arguing against the moral consideration of artificial entities. There had been some earlier arguments to this effect (e.g. Drozdek, 1994; Birmingham, 2008), but mostly the idea had simply been ignored or marginalized, as in the early machine ethics and roboethics publications, rather than explicitly critiqued.[106]

Table 13: Recent contributions keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| Bryson | 52 | 19.5% |
| Darling | 43 | 16.0% |
| Danaher | 26 | 9.7% |
| Richardson | 20 | 7.5% |
| Schwitzgebel | 16 | 5.9% |

Research on AI rights and other moral consideration of artificial entities has received a number of thorough literature reviews (e.g. Gunkel, 2018; Harris & Anthis, 2021). Several papers have called for integration of the empirical research from HCI, HRI, and social psychology with moral questions relevant to AI rights (Vanman & Kappas, 2019; Harris & Anthis, 2021). Indeed, a number of empirical research projects have been inspired by or noted their relevance to ongoing ethical discussions (e.g. Spence et al., 2018; Lima et al., 2020; Küster et al., 2021). Other contributions have also explicitly sought to integrate seemingly disparate or conflicting strands of ethical and legal reasoning about the moral consideration of artificial entities (e.g. Gellers, 2020).

In the 21st century, there have also been a number of news stories relevant to AI rights, such as the 2006 paper commissioned by the UK “Horizon Scanning Centre” suggesting that robots could be granted rights in 20 to 50 years, South Korea’s proposed “robot ethics charter” in 2007, a 2017 European Parliament resolution that recommended the granting of legal status to “electronic persons,” and the granting of Saudi Arabian citizenship to the robot Sophia in 2017 (see Harris, 2021).

These events seem to have encouraged at least some academic discussion. Certainly, a number of authors mention them (see Table 14). Occasionally, authors explicitly cite these events as a motivation for their research or interest in the topic, such as Bennett and Daly (2020) framing their work as addressing the questions raised by the European Parliament Committee on Legal Affairs’ report. In other cases, the events may be one of several influences on the authors, or just a way to help justify their research as seeming current and important. For example, shortly after the 2006 report commissioned by the Horizon Scanning Centre, a symposium was organized on the question of “Robots & Rights: Will Artificial Intelligence Change The Meaning of Human Rights?” featuring talks on the moral status of artificial entities by Nick Bostrom and Steve Torrance (James & Scott, 2008). The opening sentence of the introduction to the symposium refers to the Horizon Scanning Centre report (James & Scott, 2008), though it does not explicitly claim that this was the key spark for the symposium to be organized.[107]

Table 14: News events keyword searches

| Keyword | Items mentioning | % of items |
| --- | --- | --- |
| "European Parliament" | 30 | 11.1% |
| Sophia | 30 | 11.1% |
| "Robot ethics charter" | 6 | 2.2% |
| "Horizon Scanning" | 5 | 1.9% |

Discussion

Why has interest in this topic grown substantially in recent years?

- Certain contributors may have inspired others to publish on the topic.

The “Results” section above identifies a handful of initial authors who seem to have played a key role in sparking discussion relevant to AI rights in each new stream of research, such as Floridi for information ethics, Bostrom for transhumanism, effective altruism, and longtermism, and Gunkel and Coeckelbergh for social-relational ethics. Perhaps, then, some of the subsequent contributors who cited these authors were encouraged to address the topic because those writings sparked their interest in AI rights, or the publication of those items reassured them that it was possible (and sufficiently academically respectable) to publish about it.

This seems especially plausible given that the beginnings of exponential growth, some time between the late ‘90s and mid-’00s (Figure 1), coincide reasonably well with the first treatments of the topic by several streams of research (Figure 2). This hypothesis could be tested further through interviews with later contributors who cited those pioneering works. Of course, even if correct, this hypothetical answer to our question would then raise another question: why did those pioneering authors themselves begin to address the moral consideration of artificial entities? Again, interviews (this time with the pioneering authors) may be helpful for further exploration.

- The gradual, accumulating ubiquity of AI and robotic technology may have encouraged increased academic interest.

A common theme in the introductions of and justifications for relevant publications is that the number, technological sophistication, and social integration of robots, AIs, computers, and other artificial entities are increasing (e.g. Lehman-Wilzig, 1981; Willick, 1983; Hall, 2000; Bartneck et al., 2005b). Some of these contributors and others (e.g. Freitas, 1985; McNally & Inayatullah, 1988; Bostrom, 2014) have been motivated by predictions about further developments in these trends. We might therefore hypothesize that academic interest in the topic has been stimulated by ongoing developments in the underlying technology.

Indeed, bursts of technical publications on AI in the 1950s and ‘60s, artificial life in the ‘90s, and synthetic biology in the ‘00s seem to have sparked ethical discussions, where some of the contributors seem to have been largely unaware of previous, adjacent ethical discussions.[108]